The world will move toward decentralized identity if we make it easy to do so, and easy means, above all, fast. The solution is machine-readable governance: a smart way of implementing rules for how to manage trust.

If you want a high-speed train to go fast, you need the right kind of track. It needs to be laser-straight, have few, if any, crossings, and be free of slower freight trains. Unfortunately, the U.S. has, mostly, the wrong kind of rails: lots of crossings, lots of freight trains, and lots of curvy and unaligned tracks. One section of the Northeast Corridor can’t handle train speeds above 25 mph. And while billions will soon be spent on new high-speed trains that are lighter, more capacious, and more energy efficient, they will still run on the same rails at the same speeds.

As we race ahead with decentralized identity networks—Ontario’s announcement of its Digital ID program is the most visible sign yet that we are in an accelerating phase of a paradigm shift on identity—we face lots of infrastructural choices, the answers to which could put us in an Amtrak-like bind.

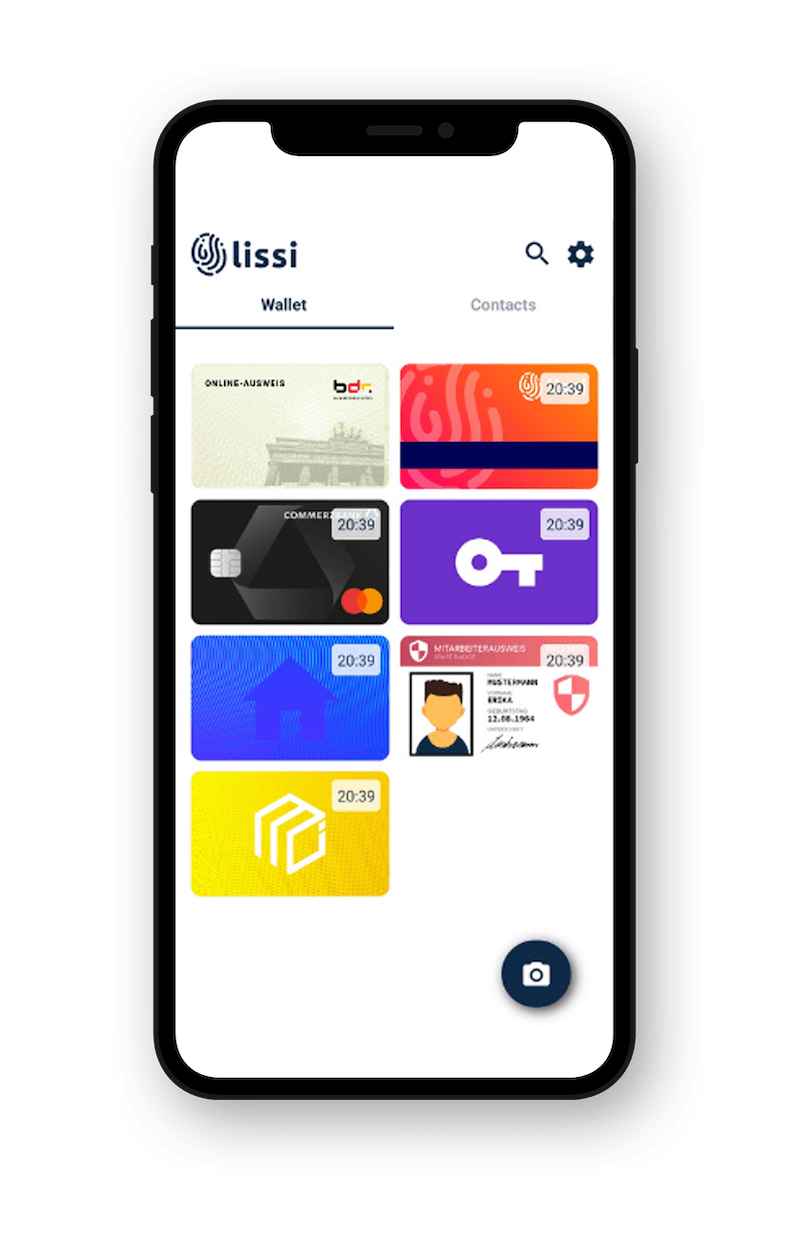

If you think of a decentralized identity network as a set of rails that allow information to be issued, shared, and verified, then that process should be as frictionless and fast as possible. And it is, because it is powered by software, called agents, that enables consent and trust at every point in the system. Once you decide that an issuer of a verifiable credential is trustworthy, verifying their credentials is straightforward. You can also apply all kinds of rules at the agent level to govern more complex information requirements in a frictionless, automatic way. A verifier agent could be programmed to accept only certain kinds of tests from a laboratory, or only tests from approved laboratories at a national or international level. The ability to do this instantaneously is essential to adoption.
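As a minimal sketch of the kind of agent-level rule described above, the following Python shows a verifier that accepts a test credential only if the issuing laboratory is on an approved list and the test type is allowed. The field names (`issuer`, `test_type`), the identifiers, and the `GovernanceRules` class are hypothetical, chosen for illustration; they are not drawn from any particular agent framework or credential format. The sketch also assumes the credential's signature has already been verified elsewhere.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class GovernanceRules:
    """Hypothetical rule set that a verifier agent holds locally."""
    approved_issuers: frozenset = field(default_factory=frozenset)
    accepted_test_types: frozenset = field(default_factory=frozenset)


def accept_credential(credential: dict, rules: GovernanceRules) -> bool:
    """Return True only if both the issuer and the test type pass the rules.

    Assumes the credential's signature was already checked elsewhere.
    """
    issuer_ok = credential.get("issuer") in rules.approved_issuers
    test_ok = credential.get("test_type") in rules.accepted_test_types
    return issuer_ok and test_ok


# Illustrative rules: two approved laboratories, PCR tests only.
rules = GovernanceRules(
    approved_issuers=frozenset({"did:example:lab-1", "did:example:lab-2"}),
    accepted_test_types=frozenset({"PCR"}),
)

pcr_from_lab_1 = {"issuer": "did:example:lab-1", "test_type": "PCR"}
antigen_from_lab_1 = {"issuer": "did:example:lab-1", "test_type": "antigen"}
pcr_from_unknown = {"issuer": "did:example:lab-9", "test_type": "PCR"}
```

Here only `pcr_from_lab_1` is accepted. Both checks are lookups against data the agent already holds, so the decision needs no network round trip.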

This is why machine-readable governance, which takes place at the agent layer, is integral to the successful deployment of any kind of decentralized trusted data ecosystem: It’s a real-time way to handle governance decisions (the Boolean choreography of ‘if this, then that’) in the most frictionless way possible. This also means that a network can organize itself and respond as locally as possible to the constant flux of life and changes in information and rules. Not everybody wants the same thing or the same thing forever.

Machine-readable governance therefore functions as a trust registry (literally a registry of who to trust as issuers and verifiers of credentials) and as a set of rules as to how information should be combined, and for whom, and in which order. It can also power graphs, or sets of connections, between multiple registries. This means that different authority structures can conform to existing hierarchical governance structures or self-organize. Some entities may publish their ‘recipe’ for interaction, including requirements for verification, while others may simply refer to other published governance. When everyone knows each other’s requirements, we can calibrate machine-readable governance to satisfy everyone’s needs in the most efficient way possible.
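To illustrate how one entity might "simply refer to other published governance," here is a small Python sketch that follows references between governance documents and collects the issuers trusted along the way. The document layout (`trusted_issuers`, `refers_to`), the publisher names, and the function itself are assumptions made for illustration, not part of any published governance format.

```python
def resolve_trusted_issuers(name: str, published: dict) -> set:
    """Collect issuers trusted by `name`, following references to other governance."""
    trusted, seen, stack = set(), set(), [name]
    while stack:
        current = stack.pop()
        if current in seen:  # guard against reference cycles
            continue
        seen.add(current)
        doc = published[current]
        trusted.update(doc.get("trusted_issuers", []))
        stack.extend(doc.get("refers_to", []))
    return trusted


# Hypothetical published governance documents, keyed by publisher.
published = {
    "national-health": {"trusted_issuers": ["lab-1", "lab-2"]},
    "regional-health": {
        "trusted_issuers": ["clinic-7"],
        "refers_to": ["national-health"],
    },
    "airline": {"refers_to": ["regional-health"]},
}

airline_trusts = resolve_trusted_issuers("airline", published)
```

In this sketch the airline publishes no issuer list of its own. It inherits `clinic-7`, `lab-1`, and `lab-2` by pointing at the regional document, which in turn points at the national one.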

Choreographing this complex workflow at the agent level delivers the speed needed by users.

The elements of machine-readable governance

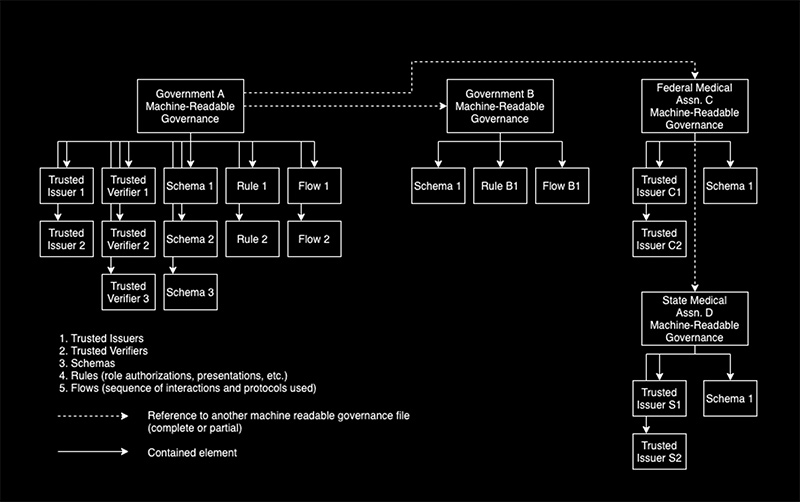

Machine-readable governance is composed of elements that help to establish trust and enable interoperability: trusted participants, schemas (templates for structuring information in a credential), and rules and flows for presenting credentials and verifying them. Machine-readable governance can be hierarchical. Once a governance system is published, other organizations can adopt and then amend or extend the provided system.

In the diagram above, Government A has published a complete set of governance rules.

Government B selected Schema 1 for use and added its own rule and flow to the governance from Government A.

Federal Medical Assn. C created its own list of trusted issuers (C1, C2), selected Schema 1 for use, and layered customized governance on top of the governance that Government A publishes.

State Medical Assn. D has taken the layered governance selected by Federal Medical Assn. C and duplicated everything except its list of issuers.
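The layering in the diagram can be sketched in a few lines of Python. This is an illustration under assumptions, not a published governance format: the `Governance` class, its `extend` method, and every rule name are hypothetical, and flows are folded into `rules` for brevity. The text does not say which issuers Government A or State Medical Assn. D list, nor which schemas Government A publishes beyond Schema 1, so `A1`, `A2`, `D1`, and `Schema 2` are placeholders.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Governance:
    """Hypothetical governance document: trusted issuers, schemas, and rules."""
    publisher: str
    issuers: tuple = ()
    schemas: tuple = ()
    rules: tuple = ()

    def extend(self, publisher, issuers=None, schemas=None, extra_rules=()):
        """Derive a new document: override issuers or schemas if given, append rules."""
        return replace(
            self,
            publisher=publisher,
            issuers=self.issuers if issuers is None else tuple(issuers),
            schemas=self.schemas if schemas is None else tuple(schemas),
            rules=self.rules + tuple(extra_rules),
        )


gov_a = Governance(
    publisher="Government A",
    issuers=("A1", "A2"),              # placeholder issuer names
    schemas=("Schema 1", "Schema 2"),  # "Schema 2" is a placeholder
    rules=("rule-a",),
)

# Government B: selects Schema 1 and adds its own rule on top of A's.
gov_b = gov_a.extend("Government B", schemas=["Schema 1"], extra_rules=["rule-b"])

# Federal Medical Assn. C: its own issuers (C1, C2), Schema 1, extra rules.
assn_c = gov_a.extend(
    "Federal Medical Assn. C",
    issuers=["C1", "C2"],
    schemas=["Schema 1"],
    extra_rules=["rule-c"],
)

# State Medical Assn. D: copies C's governance but swaps in its own issuers.
assn_d = assn_c.extend("State Medical Assn. D", issuers=["D1"])
```

Because each document is immutable and derived from its parent, extending governance never alters what the original publisher issued; `gov_a` is unchanged after B, C, and D build on it.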

If we have this fantastic, high-speed way to verify in decentralized networks, where, then, is the Amtrak problem?

It lies in the belief that the best way to do governance is to divert all traffic through a centralized trust registry. This trust registry will be run by a separate organization, third-party business, or consortium, which will decide who is a trusted issuer of credentials, and all parties will consult this single source of trust.

Here’s why this isn’t a good idea:

First, the point of high-speed rails is speed. If you must ping the trust registry for every lookup, then you have created a speed limit on how fast verification can take place. It will slow down everything.

Second, a trust registry creates a dependence on real-time calling when the system needs to be able to function offline. A downloadable machine-readable governance file allows pre-caching, which means no dependence on spotty connectivity. Given that we want interoperable credentials, it’s a little bit naïve and first-world-ish to assume the connection to the trust registry will always be on.
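A rough sketch of the pre-caching idea, assuming governance is published as a JSON file: the verifier tries to refresh its local copy and, if the network is unavailable, falls back to the last cached version. The function name, the cache file name, and the `trusted_issuers` key are hypothetical. The fetch step is passed in as a callable so that the sketch does not assume any particular HTTP client or URL.

```python
import json
import tempfile
from pathlib import Path


def load_governance(cache_path: Path, fetch) -> dict:
    """Return the governance document, preferring a fresh copy but working offline.

    `fetch` is any zero-argument callable that returns the governance file as a
    JSON string and raises OSError when the network is unreachable.
    """
    try:
        text = fetch()
        cache_path.write_text(text, encoding="utf-8")  # refresh the local cache
    except OSError:
        # Offline: fall back to the cached copy (raises if none exists yet).
        text = cache_path.read_text(encoding="utf-8")
    return json.loads(text)


def fetch_ok() -> str:
    """Stand-in for a successful download of the governance file."""
    return json.dumps({"trusted_issuers": ["did:example:lab-1"]})


def fetch_offline() -> str:
    """Stand-in for a failed download; ConnectionError subclasses OSError."""
    raise ConnectionError("no network")


cache = Path(tempfile.mkdtemp()) / "governance.json"
online_copy = load_governance(cache, fetch_ok)        # fetches and caches
offline_copy = load_governance(cache, fetch_offline)  # served from the cache
```

Once the file is cached, verification runs against the local copy, so a dropped connection delays updates to the rules rather than blocking verification itself.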

Third, a centralized trust registry is unlikely to be free or even low cost, based on non-decentralized real-world examples. Being centralized, it gets to act as a monopolist in its domain, until it needs to interact with another domain and another trust registry. Before you know it, we will need a trust registry of trust registries. With each layer of bureaucracy, the system gets slower and more unwieldy and more expensive.

This kind of centralized planning is likely to benefit only the trust registry and not the consumer. And it can all be avoided if governments and entities just publish their rules. The kicker is that the trust registries currently envisioned cannot yet accommodate rules for choreographing presentation and verification, so adopting them is literally a case of ripping up the high-speed track and replacing it with slower rails.

Yes, the analogy with Amtrak isn’t exact. The tracks that crisscross the U.S. are legacy tech while decentralized identity is entirely new infrastructure. But trust registries are an example of legacy thinking, of bolting on structures conceived for different systems and different infrastructural capacities.

With machine-readable governance, we can have smart trust registries that map to the way governments (local, federal, and global) actually make decisions and create rules.

We also move further away from a model of trust that depends on single, centralized sources of truth, and toward zero-trust-driven systems that enable fractional trust: lots of inputs from lots of sources that add up to more secure decision making.

But most of all, we use the rails we’ve built to share information in a secure, privacy-preserving way, as quickly and efficiently as possible.